Migrate classic processing rules to OpenPipeline

- Latest Dynatrace

- Upgrade guide

- Published Mar 31, 2026

This article explains how to manually migrate existing classic processing rules for logs and business events to OpenPipeline. It also covers permission management and routing, so that teams can start processing their data in OpenPipeline independently.

Why migrate?

- Unified processing model: Manage processing for multiple signal types in OpenPipeline, including logs, business events, security events, spans, and more.

- Scalable data handling: OpenPipeline handles high throughput at scale, supporting additional ingest sources and increased data volume.

- Grail data flow: Processing is Grail-based only, providing a consistent approach from ingest through DQL processing to storage.

- Pipeline groups: Enforce global processing while enabling teams to process their data independently and safely. For example, use pipeline groups for masking and permissions.

- Granular ownership: With OpenPipeline scoped ownership and access to pipelines, teams can manage day‑to‑day processing, and administrators can retain control.

What is new?

The following table summarizes the key technical differences between processing logs in the log classic pipeline and in OpenPipeline.

| Technical point | Log classic pipeline | OpenPipeline |
| --- | --- | --- |
| Data type support | String | String, Number, and Boolean |
| Content field limit | 512 kB | 10 MB |
| Field name case sensitivity | Case-insensitive | Case-sensitive¹ |
| Connect log data to traces | Built-in rules | Automatic² |
| Technology parsers | Built-in rules | Preset bundles with broader technology support |
| Query language | LQL, DQL | DQL³ |
| Metric dimension naming | No | Yes |
| Metric-key log prefix | Mandatory | Optional |

¹ When you ingest logs via Log Monitoring API v2 - POST ingest logs, field names are automatically converted to lowercase after data is routed to the classic pipeline.

² The enrichment is done automatically, without requiring any user interaction.
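Because OpenPipeline treats field names as case-sensitive, a matcher or statement that relied on case-insensitive field names in the classic pipeline may stop matching after migration. The following minimal Python sketch illustrates the difference conceptually; it is not Dynatrace code. The sample record and the helper names get_case_insensitive and get_case_sensitive are hypothetical.

```python
from typing import Any, Optional

# Hypothetical log record, modeled as a dictionary of fields.
record: dict[str, Any] = {"Loglevel": "ERROR", "content": "Disk full"}


def get_case_insensitive(rec: dict[str, Any], name: str) -> Optional[Any]:
    """Mimic classic behavior: compare field names case-insensitively.

    If two keys differ only by case, the last one wins in this sketch.
    """
    lowered = {key.lower(): value for key, value in rec.items()}
    return lowered.get(name.lower())


def get_case_sensitive(rec: dict[str, Any], name: str) -> Optional[Any]:
    """Mimic OpenPipeline behavior: the field name must match exactly."""
    return rec.get(name)


print(get_case_insensitive(record, "loglevel"))  # ERROR
print(get_case_sensitive(record, "loglevel"))    # None
print(get_case_sensitive(record, "Loglevel"))    # ERROR
```

When converting a rule, check the exact casing of field names in your sample data rather than assuming the classic behavior carries over.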

What will you do?

You'll identify the data sets processed by classic processing rules and the users responsible for this data. Based on this information, you'll create:

- New policies to grant users access to pipelines

- Pipelines and routes for data processing in OpenPipeline

Finally, you can disable classic processing rules.

Before you begin

Prerequisites

- Dynatrace version 1.295+

- Dynatrace SaaS environment powered by Grail and AppEngine

- DPS license with log or business event capabilities

- Permissions:

  - openpipeline:configurations:write

  - settings:objects:admin

Prior knowledge

- Familiarity with classic processing rules for logs or business events

- Basic understanding of DQL

- Knowledge of pipeline access control

New concepts

- Pipeline

Collection of processors executed in an ordered sequence of stages to structure, separate, and store data.

- Processor

Pre-formatted processing instruction that focuses either on modifying or extracting data. It contains a configurable matcher and processing definition.

- Pipeline group

Set of team-managed pipelines to which a shared configuration applies. The shared configuration can restrict or mandate processing, enabling centralized processing across multiple pipelines.

- Routing

Directing data to a pipeline, either based on matching conditions (dynamic) or by explicit pipeline selection (static).

How to migrate

To migrate classic processing rules to OpenPipeline:

1. Identify the data sets currently processed by classic pipelines

- Go to Settings > Process and contextualize > OpenPipeline and select your configuration scope (Logs or Business events).

- Go to Pipelines > Classic pipeline to view your classic processing rules.

- Work on one data stream at a time to reduce risk and simplify validation. For each rule, perform the following actions:

- Review the matcher expressions and processor definitions. If a rule uses sample data, export it for reuse during testing.

- Identify downstream consumers such as dashboards, alerts, metrics, and automations to understand potential impact.

- Identify the users or teams responsible for that data.
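To keep the results of this step in one place, you can record each rule in a simple inventory. The following Python sketch is one possible way to do that, assuming you maintain the inventory yourself; ClassicRuleRecord, group_by_team, rules_with_consumers, and all sample values are hypothetical placeholders, not Dynatrace APIs. Grouping by owning team gives a starting point for the pipelines you create in the next step.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class ClassicRuleRecord:
    """One row of a hypothetical migration inventory for a classic processing rule."""

    rule_name: str
    matcher: str     # matcher expression copied from the classic rule
    statement: str   # classic processing rule statement to convert
    owner_team: str  # team responsible for the data
    consumers: list[str] = field(default_factory=list)  # dashboards, alerts, metrics, automations


def group_by_team(rules: list[ClassicRuleRecord]) -> dict[str, list[str]]:
    """Group rule names by owning team to plan one or more pipelines per team."""
    grouped: dict[str, list[str]] = defaultdict(list)
    for rule in rules:
        grouped[rule.owner_team].append(rule.rule_name)
    return dict(grouped)


def rules_with_consumers(rules: list[ClassicRuleRecord]) -> list[str]:
    """List rules whose output feeds downstream consumers and needs an impact review."""
    return [rule.rule_name for rule in rules if rule.consumers]


# Hypothetical entries; replace the placeholders with values from your environment.
inventory = [
    ClassicRuleRecord("rename-order-field", "<matcher>", "<statement>", "checkout", ["Orders dashboard"]),
    ClassicRuleRecord("mask-card-number", "<matcher>", "<statement>", "payments"),
    ClassicRuleRecord("parse-cart-size", "<matcher>", "<statement>", "checkout", ["Cart size metric"]),
]

print(group_by_team(inventory))
# {'checkout': ['rename-order-field', 'parse-cart-size'], 'payments': ['mask-card-number']}
print(rules_with_consumers(inventory))
# ['rename-order-field', 'parse-cart-size']
```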

2. Create one or more pipelines per team

- In the OpenPipeline configuration scope, go to Pipelines > Pipeline to create a new custom pipeline.

- To convert the classic processing rules to OpenPipeline processors and stages:

  - Choose which processors to adopt. Each processor has its own configuration.

    While the DQL processor can replace most classic use cases, prefer specialized processors where available, as they process data more efficiently.

  - Define the processor.

    - You can reuse the Matcher and the Sample data from the classic processing rule.

    - Convert the classic processing rule statement.

  - Test the configuration using sample data.

- Once you're satisfied with the result, select Save.

  Your new pipeline is added to the table. The pipeline takes effect only once data is routed to it.
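A processor pairs a matcher with a processing definition, and testing against sample data previews the result before you save. The following Python sketch models that idea conceptually, assuming a record is a dictionary of fields; it is not the OpenPipeline implementation. Processor, preview, the log.source matching condition, and the loglevel normalization are hypothetical stand-ins for a migrated rule.

```python
from dataclasses import dataclass
from typing import Any, Callable

Record = dict[str, Any]


@dataclass
class Processor:
    """Conceptual processor: a matcher plus a processing definition (not the OpenPipeline API)."""

    name: str
    matcher: Callable[[Record], bool]    # decides whether the processor applies to a record
    process: Callable[[Record], Record]  # returns the modified record


def preview(processor: Processor, samples: list[Record]) -> list[Record]:
    """Apply the processor to copies of the samples; non-matching records pass through unchanged."""
    results = []
    for sample in samples:
        record = dict(sample)  # copy, so the exported samples stay reusable
        results.append(processor.process(record) if processor.matcher(record) else record)
    return results


def uppercase_loglevel(record: Record) -> Record:
    """Hypothetical processing definition: normalize the loglevel value."""
    record["loglevel"] = str(record["loglevel"]).upper()
    return record


normalize = Processor(
    name="normalize-loglevel",
    matcher=lambda r: r.get("log.source") == "orders",  # hypothetical matching condition
    process=uppercase_loglevel,
)

samples = [
    {"log.source": "orders", "loglevel": "warn"},
    {"log.source": "billing", "loglevel": "warn"},
]
for result in preview(normalize, samples):
    print(result)
# {'log.source': 'orders', 'loglevel': 'WARN'}
# {'log.source': 'billing', 'loglevel': 'warn'}
```

Reusing the sample data exported from the classic rule in a check like this makes it easier to confirm that the converted processor changes only the records it should.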

3. Configure routing to forward matching data to the new pipeline

- Go to Dynamic routing > Dynamic route to create a new route.

- Enter a matching condition for the route and choose the target pipeline.

- Select Add. The new route is added to the table and set to active by default.

- Position the route according to its priority: routes are evaluated in order, and the first matching route is applied.

- Optional: You can deactivate the route before saving your changes to the table. For example, you can add a new route as deactivated and activate it only after the new pipeline configuration is complete and validated.

- Select Save.

When the route is active, data that matches the condition is routed to the pipeline you created and processed accordingly, instead of by the classic pipeline. You can verify the processing results in Notebooks.

Keep classic rules enabled until all data is reliably routed and processed by OpenPipeline.
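Route order matters because the first matching active route wins. The following Python sketch models that behavior conceptually, assuming that, while classic processing rules are in place, data matching no route falls back to the default route to the classic pipeline. Route, route, the pipeline names, and the log.source conditions are hypothetical; the sketch does not call any Dynatrace API.

```python
from dataclasses import dataclass
from typing import Any, Callable

Record = dict[str, Any]

CLASSIC_PIPELINE = "Classic pipeline"  # target of the default route in this sketch


@dataclass
class Route:
    """Conceptual dynamic route: matching condition, target pipeline, active flag."""

    condition: Callable[[Record], bool]
    pipeline: str
    active: bool = True


def route(record: Record, routes: list[Route]) -> str:
    """Return the pipeline of the first active route whose condition matches.

    Records that match no active route fall through to the default route.
    """
    for candidate in routes:
        if candidate.active and candidate.condition(record):
            return candidate.pipeline
    return CLASSIC_PIPELINE


routes = [
    Route(lambda r: r.get("log.source") == "orders", "checkout-pipeline"),
    Route(lambda r: r.get("log.source") == "payments", "payments-pipeline", active=False),
]

print(route({"log.source": "orders"}, routes))    # checkout-pipeline
print(route({"log.source": "payments"}, routes))  # Classic pipeline (route is inactive)
print(route({"log.source": "billing"}, routes))   # Classic pipeline (no match)
```

Moving a route up or down in the list changes which pipeline receives data that matches more than one condition, which is why positioning the route by priority matters.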

4. Create policies that grant scoped access to pipelines

- Go to Account Management > Identity and Access Management.

- To grant users access to pipelines, create new policies with settings:objects:read and settings:objects:write permissions scoped to the OpenPipeline schemas for log and business event pipelines.

You can start simple and create one policy per configuration scope (logs or business events).

Example:

ALLOW settings:objects:read, settings:objects:write WHERE settings:schemaId IN ("builtin:openpipeline.logs.pipelines", "builtin:openpipeline.bizevents.pipelines")

Platform administrators typically retain settings:objects:admin, which grants access to all schemas, including routing.

For more information on OpenPipeline schemas, see the Settings API schemas for each configuration scope:

- Routing (builtin:openpipeline.<configuration.scope>.routing)

- Pipelines (builtin:openpipeline.<configuration.scope>.pipelines)

- Ingest sources (builtin:openpipeline.<configuration.scope>.ingest-sources)
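The schema IDs follow the pattern builtin:openpipeline.<configuration.scope>.<resource>, so you can assemble policy statements per scope. The following Python sketch builds a statement string in the form of the example policy above; the helper names schema_id and pipeline_policy are hypothetical, and combining several comma-separated permissions in one ALLOW statement is an assumption to verify against the IAM policy syntax reference. The sketch only produces text to paste into a policy in Identity and Access Management.

```python
# Resource suffixes taken from the schema pattern listed above.
SCOPE_RESOURCES = ("routing", "pipelines", "ingest-sources")


def schema_id(scope: str, resource: str) -> str:
    """Build a schema ID following builtin:openpipeline.<configuration.scope>.<resource>."""
    if resource not in SCOPE_RESOURCES:
        raise ValueError(f"unknown resource: {resource}")
    return f"builtin:openpipeline.{scope}.{resource}"


def pipeline_policy(
    scopes: list[str],
    permissions: tuple[str, ...] = ("settings:objects:read", "settings:objects:write"),
) -> str:
    """Compose a policy statement limited to the pipelines schemas of the given scopes."""
    ids = ", ".join(f'"{schema_id(scope, "pipelines")}"' for scope in scopes)
    return f"ALLOW {', '.join(permissions)} WHERE settings:schemaId IN ({ids})"


print(pipeline_policy(["logs", "bizevents"]))
# ALLOW settings:objects:read, settings:objects:write WHERE settings:schemaId IN ("builtin:openpipeline.logs.pipelines", "builtin:openpipeline.bizevents.pipelines")
```

Generating one statement per scope, for example pipeline_policy(["logs"]), matches the suggestion to start with one policy per configuration scope.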

OpenPipeline Settings API schemas:

- builtin:openpipeline.bizevents.ingest-sources

- builtin:openpipeline.bizevents.pipelines

- builtin:openpipeline.bizevents.routing

- builtin:openpipeline.davis.events.ingest-sources

- builtin:openpipeline.davis.events.pipelines

- builtin:openpipeline.davis.events.routing

- builtin:openpipeline.davis.problems.ingest-sources

- builtin:openpipeline.davis.problems.pipelines

- builtin:openpipeline.davis.problems.routing

- builtin:openpipeline.events.ingest-sources

- builtin:openpipeline.events.pipelines

- builtin:openpipeline.events.routing

- builtin:openpipeline.events.sdlc.ingest-sources

- builtin:openpipeline.events.sdlc.pipelines

- builtin:openpipeline.events.sdlc.routing

- builtin:openpipeline.events.security.ingest-sources

- builtin:openpipeline.events.security.pipelines

- builtin:openpipeline.events.security.routing

- builtin:openpipeline.logs.ingest-sources

- builtin:openpipeline.logs.pipelines

- builtin:openpipeline.logs.routing

- builtin:openpipeline.metrics.ingest-sources

- builtin:openpipeline.metrics.pipelines

- builtin:openpipeline.metrics.routing

- builtin:openpipeline.security.events.ingest-sources

- builtin:openpipeline.security.events.pipelines

- builtin:openpipeline.security.events.routing

- builtin:openpipeline.spans.ingest-sources

- builtin:openpipeline.spans.pipelines

- builtin:openpipeline.spans.routing

- builtin:openpipeline.system.events.ingest-sources

- builtin:openpipeline.system.events.pipelines

- builtin:openpipeline.system.events.routing

- builtin:openpipeline.user.events.ingest-sources

- builtin:openpipeline.user.events.pipelines

- builtin:openpipeline.user.events.routing

- builtin:openpipeline.user.sessions.ingest-sources

- builtin:openpipeline.user.sessions.pipelines

- builtin:openpipeline.user.sessions.routing

5. Disable the corresponding classic processing rules

After validating results, you can disable the classic processing rules that you migrated to OpenPipeline.

- Go to Settings > Process and contextualize > OpenPipeline and select your configuration scope (Logs or Business events).

- Go to Pipelines > Classic pipeline to view your classic processing rules.

- Turn off the classic processing rule.

Learn more

- Data flow in OpenPipeline: The end‑to‑end path data follows from ingest through storage.

- Processing in OpenPipeline: Pipelines, stages, and processors used to transform data.

- Owner-based access control in OpenPipeline: Policies and scopes that manage pipeline access level and ownership.

- OpenPipeline pipeline groups: Group setup for shared and enforced pipeline stages.

- Configure a processing pipeline: Step‑by‑step pipeline configuration guidance.

- OpenPipeline processing examples: Examples of OpenPipeline processor configuration that can be compared with the log processing examples to clarify conceptual differences.

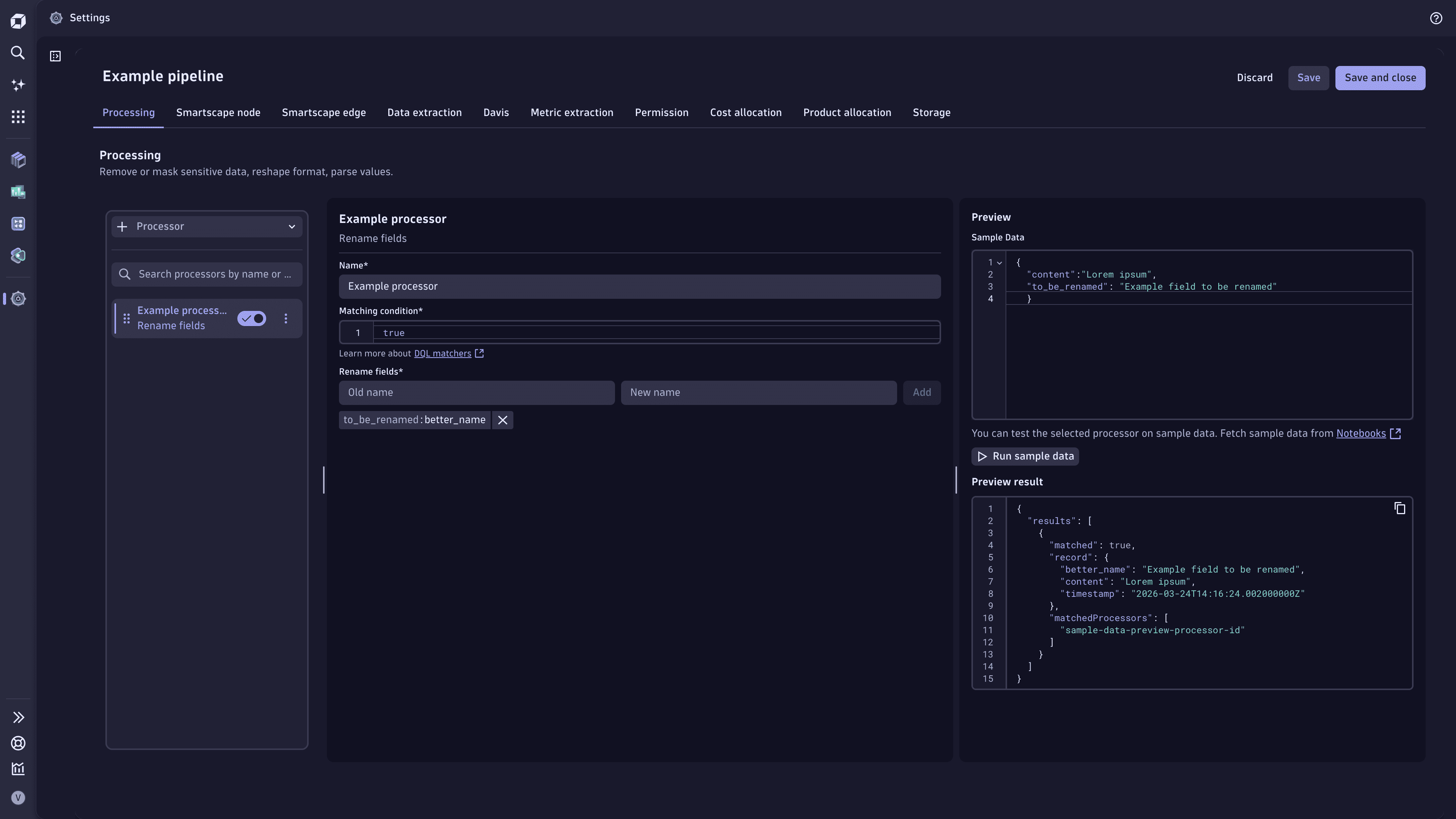

Example — Rename attributes

Classic pipeline

USING(INOUT to_be_renamed, content)
| FIELDS_RENAME(better_name: to_be_renamed)

OpenPipeline

Rename fields processor: Enter the name of the field to rename and its new name.

Example processor that renames fields in OpenPipeline
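The classic statement and the Rename fields processor express the same mapping from an old field name to a new one. The following Python sketch shows that mapping applied to a single record, modeled as a dictionary; it is a conceptual illustration, not the processor's implementation. The function rename_fields is hypothetical, and skipping fields that are absent is an assumption of the sketch rather than documented processor behavior.

```python
from typing import Any


def rename_fields(record: dict[str, Any], renames: dict[str, str]) -> dict[str, Any]:
    """Return a copy of record with fields renamed according to {old_name: new_name}.

    Field names are matched exactly (case-sensitively); absent fields are skipped.
    """
    result = dict(record)
    for old_name, new_name in renames.items():
        if old_name in result:
            result[new_name] = result.pop(old_name)
    return result


record = {"content": "order created", "to_be_renamed": "42"}
print(rename_fields(record, {"to_be_renamed": "better_name"}))
# {'content': 'order created', 'better_name': '42'}
```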


FAQ

Can classic pipelines and OpenPipeline run side by side?

Yes.

Data is processed according to the first matching route. As long as classic processing rules are in place in your environment, the classic pipeline is included in OpenPipeline routing and is the default processing mechanism. When you create new pipelines and associated routes, position each new route above the default route so that matching data is processed by the new pipeline. Data that doesn't match the new route condition is still sent by the default route to the classic pipeline.

Can I enforce consistent processing?

Yes.

- You can set a route that matches all data and position it above the other routes. OpenPipeline then processes all data with the specified pipeline.

- You can set up pipeline groups to enforce and restrict processing, for example, for bucket assignment and permissions, while still allowing teams to manage their own processing logic.