Terms and concepts about AI Observability and GenAI in Dynatrace

- Latest Dynatrace

- Explanation

- Published Nov 28, 2023

Dynatrace GenAI Observability helps you troubleshoot conversation issues, performance, and costs. It correlates data across the product so that you can effectively resolve issues in applications built on Large Language Models (LLMs) or traditional Machine Learning (ML).

This page provides definitions and explanations of the key terms used in our documentation.

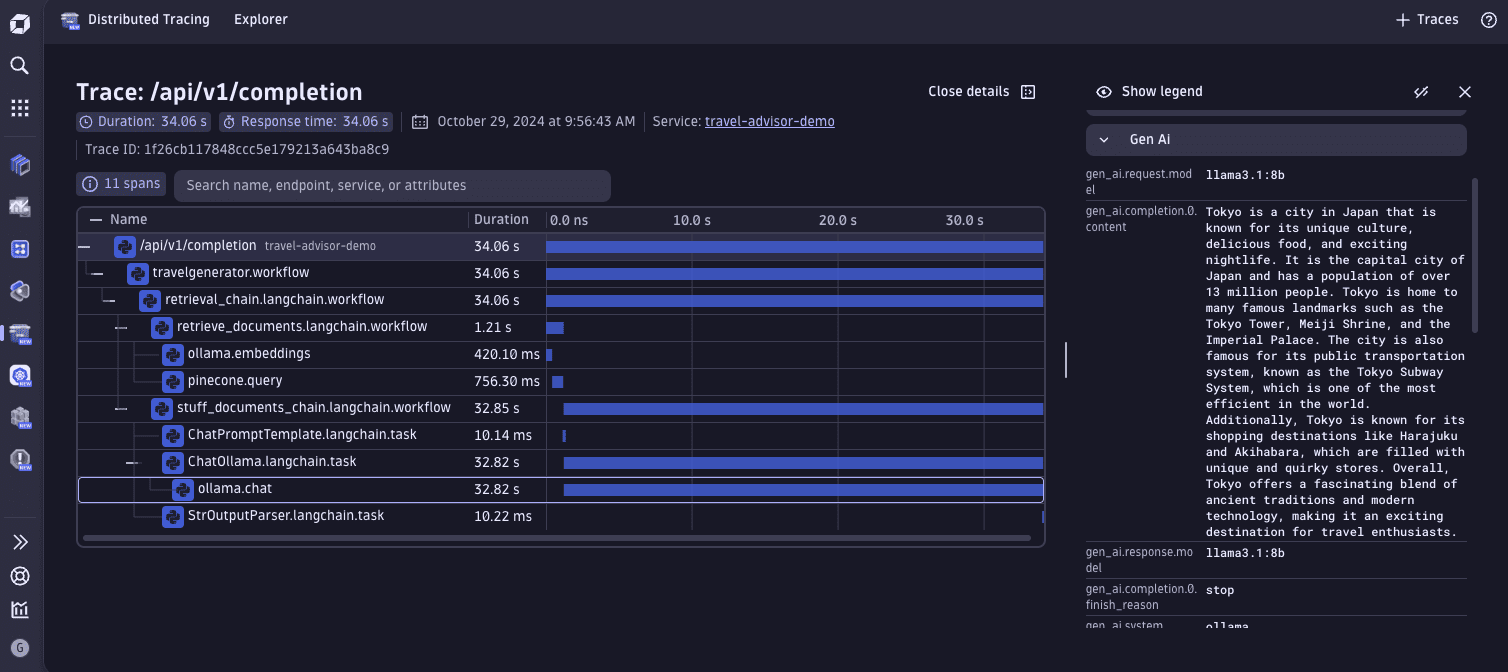

Retrieval-Augmented Generation (RAG)

RAG is an AI technique that enhances the performance of LLMs by retrieving relevant documents or information from external sources. The retrieved data is used to enhance the input to the LLM during text generation.

Unlike traditional LLMs that rely solely on internal training data, RAG leverages real-time information to deliver more accurate, up-to-date, and contextually relevant responses.
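The retrieve-then-augment flow can be illustrated with a minimal sketch. It assumes a toy in-memory knowledge base and a word-overlap score in place of a real vector search; the names `Document`, `retrieve`, and `build_augmented_prompt` are hypothetical and not part of any Dynatrace or OpenLLMetry API.

```python
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str
    text: str


def retrieve(query: str, documents: list[Document], top_k: int = 2) -> list[Document]:
    """Rank documents by how many query words they share (toy scoring)."""
    query_words = set(query.lower().split())

    def score(doc: Document) -> int:
        return len(query_words & set(doc.text.lower().split()))

    ranked = sorted(documents, key=score, reverse=True)
    # Keep at most top_k documents and drop those with no overlap at all.
    return [doc for doc in ranked[:top_k] if score(doc) > 0]


def build_augmented_prompt(query: str, retrieved: list[Document]) -> str:
    """Prepend the retrieved text to the user question before calling the LLM."""
    context = "\n".join(f"[{doc.doc_id}] {doc.text}" for doc in retrieved)
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical knowledge base used only for illustration.
KNOWLEDGE_BASE = [
    Document("kb-1", "Flights to Vienna depart daily at 9 am"),
    Document("kb-2", "Checked baggage allowance is 23 kg"),
    Document("kb-3", "Lounge access requires a business ticket"),
]

question = "What is the baggage allowance"
prompt = build_augmented_prompt(question, retrieve(question, KNOWLEDGE_BASE))
print(prompt)
```

In a real application, the retrieval step would typically query a vector database, and both the retrieval and the LLM call would show up as separate spans in the trace.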

Agents

In the context of AI and language models, an agent is an autonomous or semi-autonomous entity designed to perform specific tasks or solve problems.

Agents perform actions on behalf of users, professionals, or other systems, often based on received inputs or objectives. These agents can operate with varying levels of independence and intelligence, making them suitable for complex decision-making tasks, often powered by a language model.

Agentic

An agentic system utilizes intelligent agents that manage or request specific RAG tasks in real-time, enhancing control over the retrieval process.

In an agentic system, several agents work together to address complex queries. These agents assess the relevance of information dynamically, prioritize it, and modify the generation process based on evolving contexts.

Guardrails

Guardrails are runtime controls that help keep AI and agentic applications safe, compliant, and predictable by detecting and handling unsafe or disallowed behavior (for example, policy-violating content, sensitive data/PII exposure, or abuse patterns). For AI observability, guardrails are critical because they explain why a model interaction was blocked, modified (redacted or truncated), or allowed. This turns a generic “the model failed” error into an actionable, policy-driven root cause that you can investigate and tune.

We recommend enabling provider-native guardrails as close to the model as possible (for example, Amazon Bedrock guardrails, Azure OpenAI safety filters, and OpenAI safety mechanisms) and emitting their outcomes via OpenTelemetry. OpenLLMetry captures guardrail data on the relevant GenAI spans using the OpenTelemetry GenAI semantic conventions (for example, outcome, category/reason, action taken, and provider/system). Dynatrace consumes these attributes and metrics through OpenTelemetry ingestion, so you can filter guardrail-triggered requests, correlate them with latency, token usage, and cost, and build dashboards and alerts on trends such as rising blocks or redactions.
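As a rough illustration of the kind of trend you might track, the following sketch computes how often a guardrail intervened across a set of spans. Spans are modeled as plain dictionaries, and the attribute key `guardrail.action` with the values `allowed`, `blocked`, and `redacted` is a hypothetical placeholder; check which guardrail attributes your provider and instrumentation actually emit.

```python
from collections import Counter

# Hypothetical attribute key and values; real keys depend on your provider
# and instrumentation.
GUARDRAIL_ACTION_KEY = "guardrail.action"

# Hypothetical spans, simplified to the fields needed here.
spans = [
    {"name": "chat", "attributes": {GUARDRAIL_ACTION_KEY: "allowed"}},
    {"name": "chat", "attributes": {GUARDRAIL_ACTION_KEY: "blocked"}},
    {"name": "chat", "attributes": {GUARDRAIL_ACTION_KEY: "redacted"}},
    {"name": "chat", "attributes": {GUARDRAIL_ACTION_KEY: "allowed"}},
    {"name": "embeddings", "attributes": {}},  # no guardrail evaluated
]


def guardrail_summary(spans: list[dict]) -> dict:
    """Count guardrail actions and the share of requests that were not allowed."""
    actions = Counter(
        span["attributes"][GUARDRAIL_ACTION_KEY]
        for span in spans
        if GUARDRAIL_ACTION_KEY in span["attributes"]
    )
    evaluated = sum(actions.values())
    intervened = evaluated - actions.get("allowed", 0)
    rate = intervened / evaluated if evaluated else 0.0
    return {"actions": dict(actions), "intervention_rate": rate}


summary = guardrail_summary(spans)
print(summary)
```

A rising intervention rate over time is the kind of signal that could back a dashboard tile or an alert.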

Instrumentation

Instrumentation is the process of adding observability code to an application.

Dynatrace uses OpenLLMetry, an instrumentation library based on OpenTelemetry. OpenLLMetry automatically registers an OpenTelemetry SDK and a list of instruments for popular GenAI frameworks, models, and vector databases.

To learn how to configure OpenLLMetry in your application, see the Get started page.
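For orientation, a minimal initialization sketch might look like the following. It assumes the `traceloop-sdk` Python package, a Dynatrace environment URL, and an API token with permission to ingest OpenTelemetry data; the environment variable names `DT_ENVIRONMENT_URL` and `DT_API_TOKEN` and the helper functions are hypothetical. Treat the Get started page as the authoritative reference.

```python
import os

try:
    from traceloop.sdk import Traceloop  # pip install traceloop-sdk
except ImportError:  # keeps this sketch importable without the SDK installed
    Traceloop = None


def build_otlp_settings(environment_url: str, api_token: str) -> dict:
    """Return the endpoint and headers for exporting OTLP data to Dynatrace."""
    return {
        "api_endpoint": environment_url.rstrip("/") + "/api/v2/otlp",
        "headers": {"Authorization": f"Api-Token {api_token}"},
    }


def init_tracing(app_name: str) -> bool:
    """Initialize OpenLLMetry if the SDK and credentials are available."""
    url = os.environ.get("DT_ENVIRONMENT_URL")  # hypothetical variable name
    token = os.environ.get("DT_API_TOKEN")      # hypothetical variable name
    if Traceloop is None or not url or not token:
        return False
    Traceloop.init(app_name=app_name, **build_otlp_settings(url, token))
    return True
```

Calling `init_tracing("my-genai-app")` once at application startup would register the instrumentations; the function returns `False` instead of raising when the SDK or credentials are missing.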

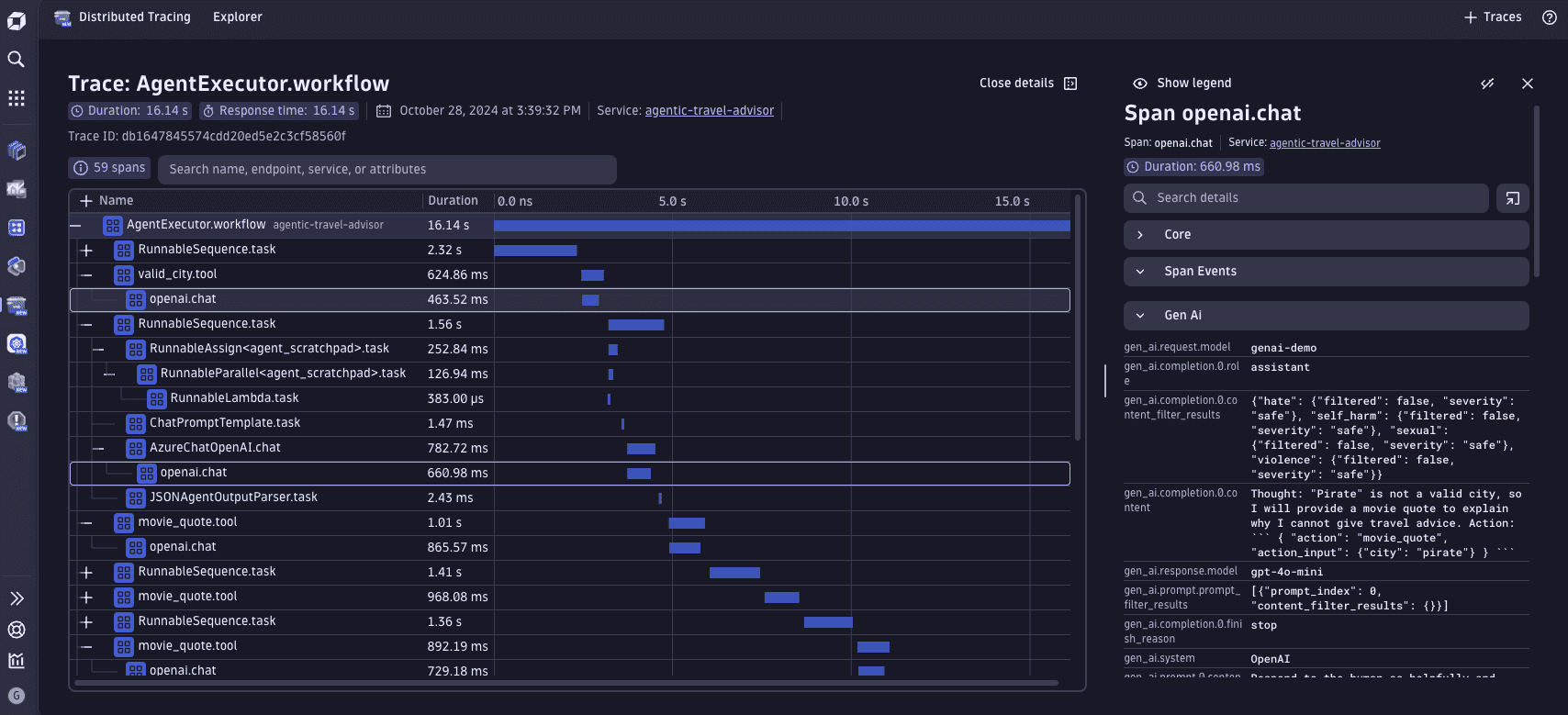

Traces

A trace describes a user request and all the operations performed to satisfy it. We can analyze the steps relevant to observing AI/ML workloads.

Traces help bring visibility into complex workflows, providing information about costs, performance, and insights into the quality of the generated output in the context of AI/ML workloads.

LLM applications commonly contain complex, autonomous logic in which a model makes the decisions. We can leverage traces to understand how requests propagate across RAG or agentic pipelines and to see the details of each step that was executed.

Each action performed in a trace is stored as a span. The span attributes contain information relevant to AI/ML workloads (such as token costs for the operation, and input and output prompts). OpenLLMetry follows the OpenTelemetry Semantic Conventions for GenAI, so it's easy to find the relevant attribute keys.
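To make the relationship between a trace, its spans, and the span attributes concrete, here is a minimal sketch that models a trace as a list of spans, renders the span hierarchy, and sums token usage. The `Span` class, the span names, and the token values are hypothetical; only the attribute keys `gen_ai.usage.input_tokens` and `gen_ai.usage.output_tokens` come from the semantic conventions.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    span_id: str
    parent_id: Optional[str]
    name: str
    attributes: dict = field(default_factory=dict)


def token_totals(spans: list[Span]) -> dict:
    """Sum input and output tokens over all spans that report them."""
    totals = {"input": 0, "output": 0}
    for span in spans:
        totals["input"] += span.attributes.get("gen_ai.usage.input_tokens", 0)
        totals["output"] += span.attributes.get("gen_ai.usage.output_tokens", 0)
    return totals


def render_tree(spans: list[Span], parent_id: Optional[str] = None, depth: int = 0) -> list[str]:
    """Return the span names indented by their depth in the trace."""
    lines = []
    for span in spans:
        if span.parent_id == parent_id:
            lines.append("  " * depth + span.name)
            lines.extend(render_tree(spans, span.span_id, depth + 1))
    return lines


# Hypothetical RAG trace: one request, one retrieval step, two model calls.
trace = [
    Span("1", None, "POST /chat"),
    Span("2", "1", "retrieve documents"),
    Span("3", "2", "embeddings", {"gen_ai.usage.input_tokens": 12}),
    Span("4", "1", "chat", {"gen_ai.usage.input_tokens": 100,
                            "gen_ai.usage.output_tokens": 180}),
]

print("\n".join(render_tree(trace)))
print(token_totals(trace))
```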

The Distributed traces concepts page explains the trace concept in more detail.

Agentic trace screenshot

RAG trace screenshot

Traceloop span kind

Traceloop marks spans that belong to an LLM framework with a particular attribute, traceloop.span.kind. This attribute helps to organize and understand the structure of your application's traces, making it easier to analyze and debug complex LLM-based systems.

The traceloop.span.kind attribute can have one of four possible values:

- workflow: Represents a high-level process or chain of operations.
- task: Denotes a specific operation or step within a workflow.
- agent: Indicates an autonomous component that can make decisions or perform actions.
- tool: Represents a utility or function used within the application.
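As a small illustration of how this attribute helps organize a trace, the sketch below groups span names by their `traceloop.span.kind` value. The spans are hypothetical and modeled as plain dictionaries; spans without the attribute (for example, a raw model call) are collected under a placeholder label.

```python
from collections import defaultdict

SPAN_KIND_KEY = "traceloop.span.kind"

# Hypothetical spans from an agentic trace.
spans = [
    {"name": "plan_trip", "attributes": {SPAN_KIND_KEY: "agent"}},
    {"name": "search_flights", "attributes": {SPAN_KIND_KEY: "tool"}},
    {"name": "summarize_results", "attributes": {SPAN_KIND_KEY: "task"}},
    {"name": "chat", "attributes": {"gen_ai.operation.name": "chat"}},
    {"name": "book_hotel", "attributes": {SPAN_KIND_KEY: "tool"}},
]


def group_by_span_kind(spans: list[dict]) -> dict:
    """Map each span kind to the names of the spans that carry it."""
    groups = defaultdict(list)
    for span in spans:
        kind = span["attributes"].get(SPAN_KIND_KEY, "(not set)")
        groups[kind].append(span["name"])
    return dict(groups)


for kind, names in group_by_span_kind(spans).items():
    print(f"{kind}: {', '.join(names)}")
```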

OpenTelemetry GenAI semantic conventions

The OpenTelemetry GenAI semantic conventions standardize the attributes captured for generative AI operations.

Common attributes

| Attribute | Description | Example |
|---|---|---|
| gen_ai.operation.name | The name of the operation being performed | chat, embeddings, invoke_agent |
| gen_ai.provider.name | The GenAI provider | openai, anthropic, aws.bedrock |
| gen_ai.request.model | The model used for the request | gpt-5.2 |
| gen_ai.response.model | The model that generated the response | gpt-5.2-0613 |
| gen_ai.usage.input_tokens | Number of tokens in the prompt | 100 |
| gen_ai.usage.output_tokens | Number of tokens in the completion | 180 |
| gen_ai.request.max_tokens | Maximum tokens requested | 100 |
| gen_ai.response.id | The unique identifier for the completion | chatcmpl-123 |
| gen_ai.response.finish_reasons | Reasons the model stopped generating | ["stop"] |
| gen_ai.conversation.id | Unique identifier for the conversation | conv_5j66UpCpwteGg4YSxUnt7lPY |
| gen_ai.request.temperature | Temperature parameter for the request | 0.7 |
| gen_ai.request.top_p | The top_p sampling setting | 1.0 |

Metrics

OpenTelemetry defines standard metrics for GenAI operations:

| Metric | Description |
|---|---|
| gen_ai.client.token.usage | Token usage by input/output type |
| gen_ai.client.operation.duration | Duration of GenAI operations |

These conventions ensure consistent data across different AI providers, making it easier to compare performance and costs.
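The following sketch shows why consistent attribute names make cross-provider comparison straightforward: the same aggregation works for every provider. It simplifies `gen_ai.client.token.usage` to one value per recorded measurement and assumes each measurement carries a `gen_ai.token.type` attribute with the value `input` or `output`, in addition to `gen_ai.provider.name`. The data points are hypothetical.

```python
from collections import defaultdict

# Hypothetical measurements of gen_ai.client.token.usage.
data_points = [
    {"value": 100, "attributes": {"gen_ai.token.type": "input",
                                  "gen_ai.provider.name": "openai"}},
    {"value": 180, "attributes": {"gen_ai.token.type": "output",
                                  "gen_ai.provider.name": "openai"}},
    {"value": 250, "attributes": {"gen_ai.token.type": "input",
                                  "gen_ai.provider.name": "aws.bedrock"}},
    {"value": 90, "attributes": {"gen_ai.token.type": "output",
                                 "gen_ai.provider.name": "aws.bedrock"}},
    {"value": 50, "attributes": {"gen_ai.token.type": "input",
                                 "gen_ai.provider.name": "openai"}},
]


def tokens_by_provider(points: list[dict]) -> dict:
    """Sum token usage per provider, split by token type."""
    totals = defaultdict(lambda: {"input": 0, "output": 0})
    for point in points:
        attrs = point["attributes"]
        provider = attrs["gen_ai.provider.name"]
        totals[provider][attrs["gen_ai.token.type"]] += point["value"]
    return {provider: dict(usage) for provider, usage in totals.items()}


for provider, usage in tokens_by_provider(data_points).items():
    print(provider, usage)
```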

Agent span attributes

For AI agents, additional attributes are available. See GenAI agent spans for the full specification.

| Attribute | Description | Example |
|---|---|---|
| gen_ai.agent.id | The unique identifier of the agent | assist_agent_5j66UpCpwteGg4YSxUnt7lPY |
| gen_ai.agent.name | Human-readable name of the agent | Supervisor, FAQ Agent |
| gen_ai.agent.description | Free-form description of the agent | Orchestrates agents for flight details |
| gen_ai.data_source.id | The data source identifier for RAG | H7STPQYOND |
| gen_ai.output.type | The content type requested | text, json, image |
| gen_ai.system_instructions | System message or instructions | JSON array of instructions |
| gen_ai.tool.definitions | Tool definitions available to the agent | JSON array of tools |
| gen_ai.input.messages | Chat history provided to the model | JSON array of messages |
| gen_ai.output.messages | Messages returned by the model | JSON array of responses |

OpenAI-specific attributes

When using OpenAI, set gen_ai.provider.name to openai. See OpenAI semantic conventions for details.

| Attribute | Description | Example |
|---|---|---|
| openai.request.service_tier | The service tier requested | auto, default |
| openai.response.service_tier | The service tier used for the response | scale, default |
| openai.response.system_fingerprint | Fingerprint to track environment changes | fp_44709d6fcb |

AWS Bedrock and AgentCore-specific attributes

When using AWS Bedrock, set gen_ai.provider.name to aws.bedrock. See AWS Bedrock semantic conventions for details.

| Attribute | Description | Example |
|---|---|---|
| aws.bedrock.guardrail.id | The unique identifier of the AWS Bedrock Guardrail. A guardrail helps safeguard and prevent unwanted behavior from model responses | sgi5gkybzqak |
| aws.bedrock.knowledge_base.id | The unique identifier of the AWS Bedrock Knowledge base used for RAG | XFWUPB9PAW |

Azure AI Foundry and Inference-specific attributes

When using Azure AI Inference, set gen_ai.provider.name to azure.ai.inference. See Azure AI Inference semantic conventions for details.

| Attribute | Description | Example |
|---|---|---|
| azure.resource_provider.namespace | Azure Resource Provider Namespace as recognized by the client | Microsoft.CognitiveServices |